Australia MVP


At Taxfix, I led the end-to-end design of a mobile-first MVP to test whether Australians would adopt and pay for a new tax solution. Within four months and a single tax season, the product reached 217% of its payment targets, validating strong market demand.


Dive Deep

The Problem

Despite the availability of digital tax solutions, a large portion of Australians still relied on human accountants.

Users wanted to maximize their returns and felt uncertain that digital tools could deliver the same level of optimization as a human expert.

Key Challenges

- Trust X Automation: Users valued human expertise and were skeptical of fully digital solutions.

- Market-specific Behavior: Australian users were highly optimization-driven, requiring transparency and reassurance.

- Time Constraints: The product had to be designed, built, and launched within a short, fixed tax-season window.

Design Process

Australian tax behavior is fundamentally different from European markets: users aren't looking for simplicity, they're looking for optimization. They want to know they're getting every dollar back, not just filing correctly. This made trust-building the primary design problem — not task completion. The experience had to feel like an expert, not a form.

Behavioral analysis

Instead of simply replicating the existing Taxfix product, the focus shifted to a different question:

How might we deliver a sense of optimization and trust within a lightweight, testable MVP?

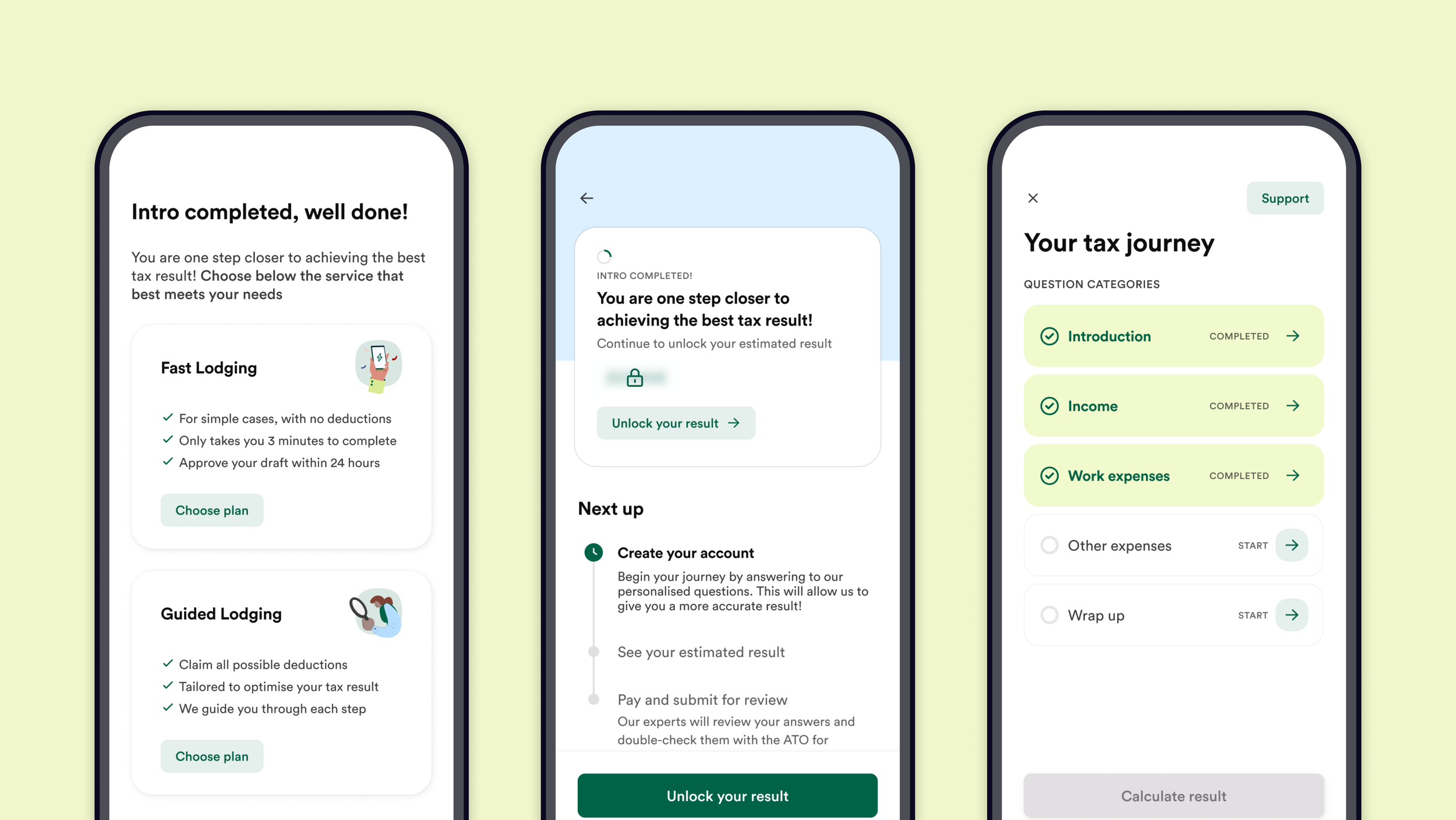

Key decision → prioritize perceived value and reassurance over operational completeness.

Opportunity framing

Instead of investing in full automation, the MVP used manual operations behind the scenes to simulate a complete product. This decoupled the user experience from engineering complexity, so we could test whether Australians trusted and paid for the product before committing to the infrastructure cost of building it for real.

Design became the instrument for de-risking a multi-market expansion decisions.

Defining a true MVP

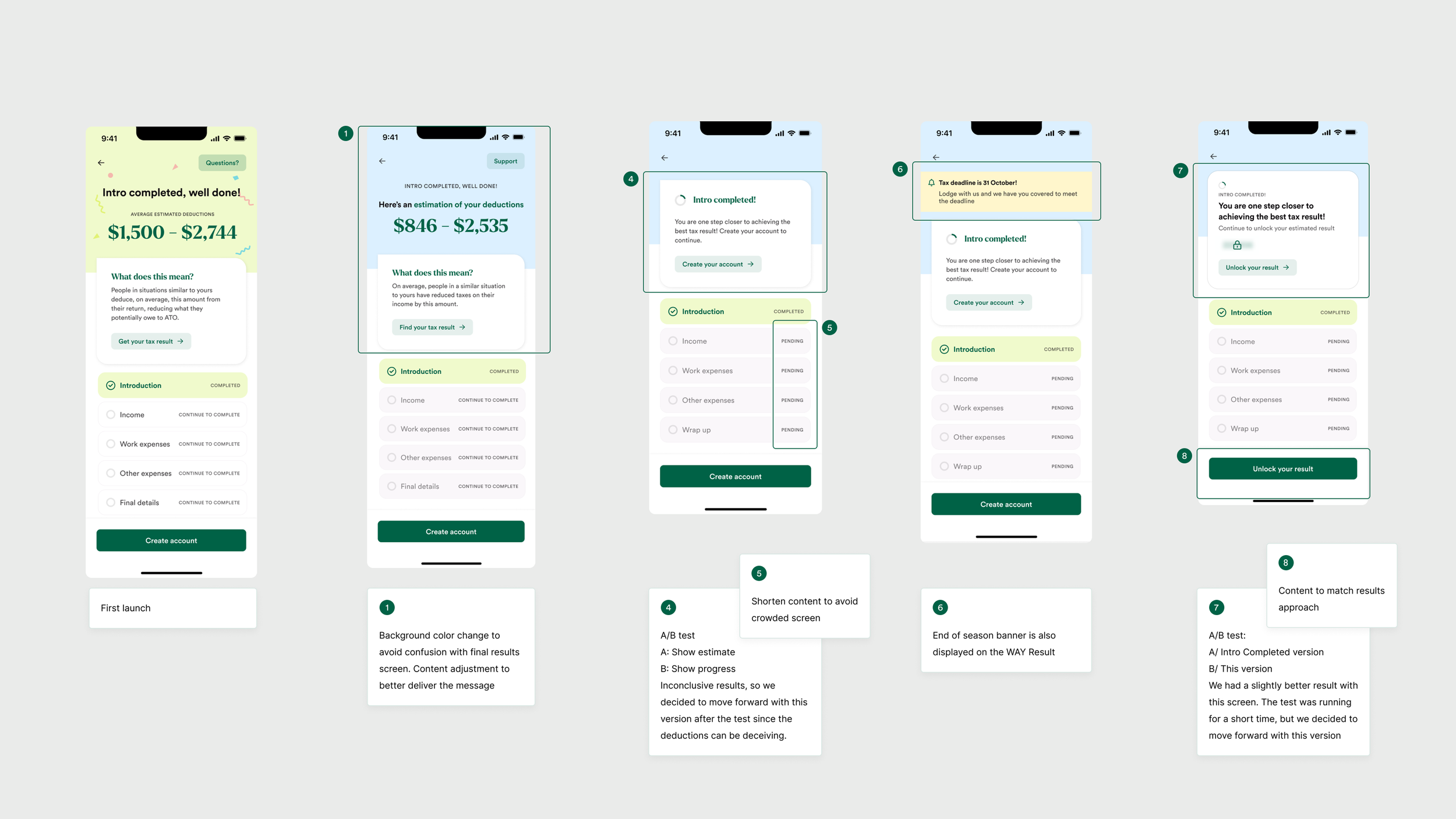

The MVP was launched and tested live for 4 months, with continuous iteration based on user behavior and data. We ran +70 iterations across content and messaging, pricing strategies, value communication and interaction patterns.

Validation and iterations

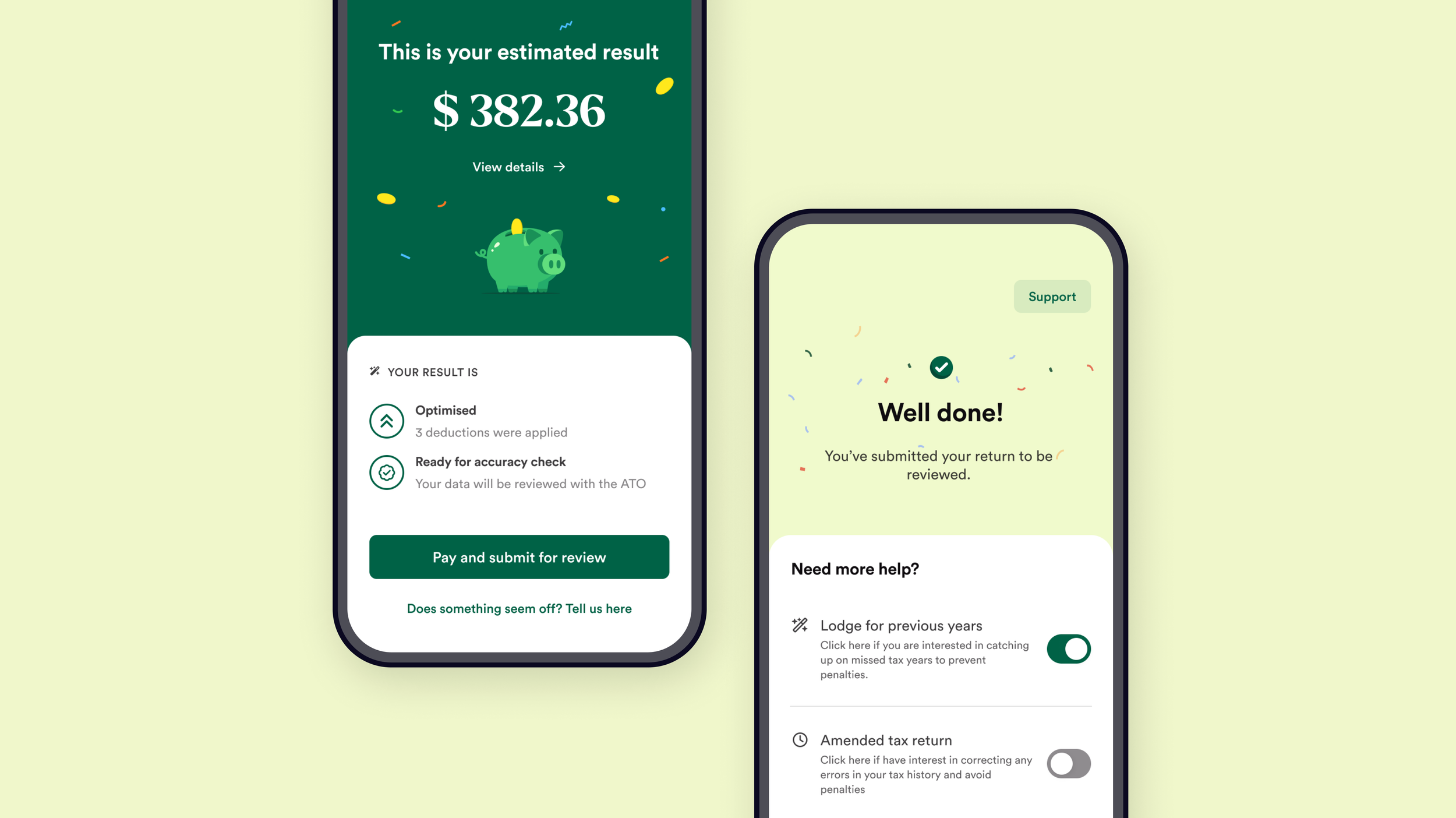

The final solution was designed as a lightweight but complete system that balances user trust, business validation, and operational feasibility.

Key directions included:

- Emphasizing estimated returns early in the journey

- Introducing moments of anticipation to drive engagement

- Surfacing transparency around deductions and calculations

- Guiding users through a structured but simple flow

Impact

On Business

217% of payment targets achieved. Conversion goals surpassed in the first half of the testing window. Users paid at higher price points than projected, revealing pricing elasticity the company didn't know it had.

These results didn't just validate Australia as a market. They established a repeatable model for how Taxfix tests new markets: lead with perceived value, validate willingness to pay, build infrastructure only after the signal is clear.

Design

This project established a repeatable approach for testing new markets through design.

By redefining the MVP as a balance between experience and operational support, it enabled rapid experimentation without compromising user trust.

It also demonstrated how design can directly shape business validation, translating user behavior into measurable signals that informed strategic decisions.